Spec-driven development starts one step too late

Spec-driven development is a good idea. GitHub Spec Kit and AWS Kiro are good tools. Neither of them helps you with the hard part.

That's not a criticism. It's a structural observation. SDD - the practice of giving coding agents precise, machine-readable specifications instead of freeform prompts - addresses a real problem. AI coding agents execute on whatever they're given. Vague input produces plausible-looking code that does the wrong thing. A structured spec reduces the distance between what you meant and what gets built.

That works. Right up until you ask where the spec came from.

SDD is solving a real problem

GitHub Spec Kit and AWS Kiro are serious tools. They are not hype. They reflect a genuine insight: if you want a coding agent to build the right thing, you need to give it something more precise than "add a settings page."

Spec Kit formalizes the specification as a project artifact - a first-class document that the agent reads, interprets, and reasons against before writing a line of code. Kiro extends this further, introducing a structured workflow where requirements, design, and task decomposition happen in sequence before implementation begins. Both tools are responses to the same observation: most teams treat the spec as an afterthought. SDD makes it the starting point.

This is the right instinct. The problem is upstream of where they start.

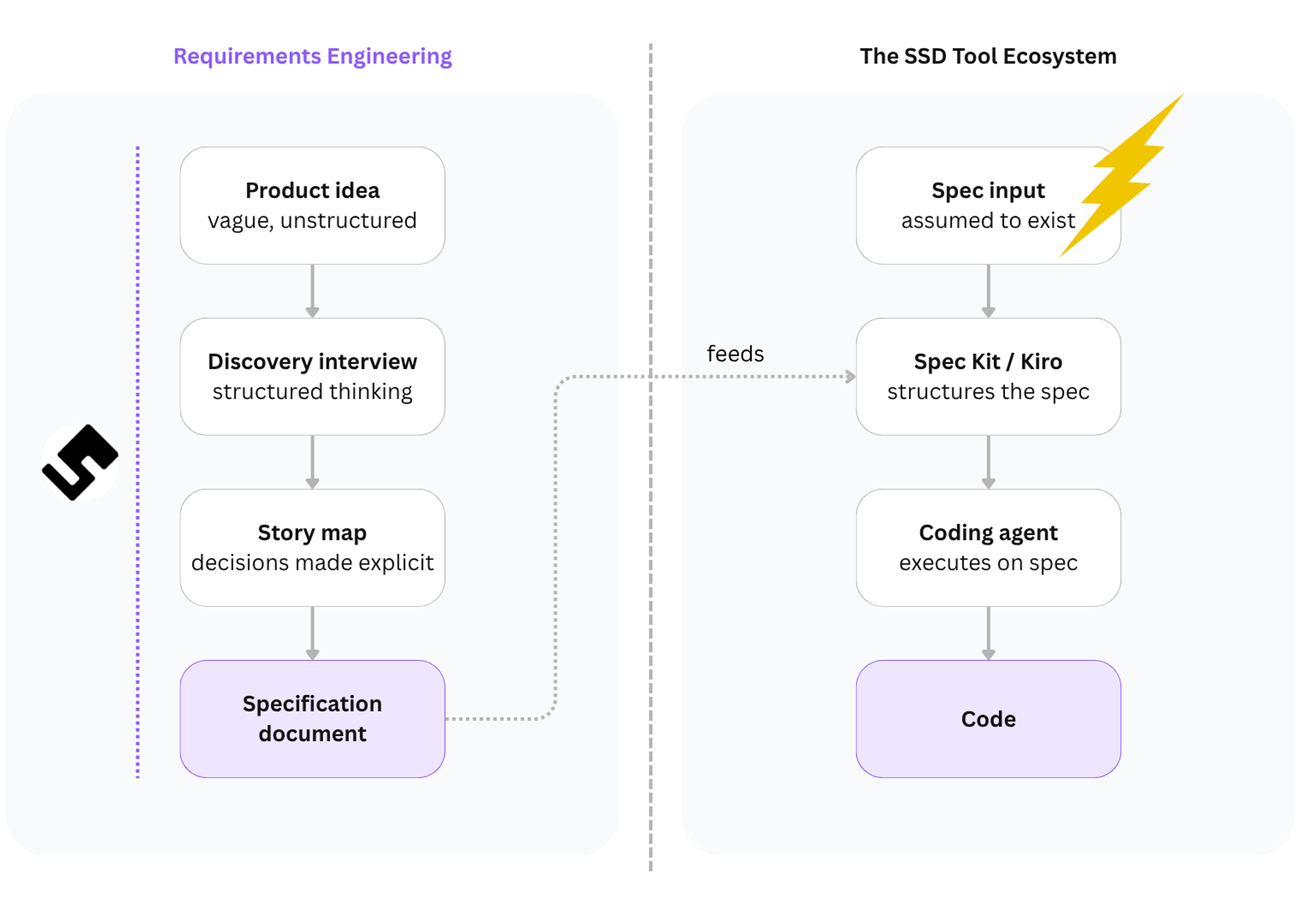

Every tool starts at step two

Look at where any SDD tool begins its workflow.

There is a spec file. The spec file contains a description of what needs to be built. The tool reads it, structures it, and hands it to a coding agent. The agent builds it.

That chain is clean. The question nobody asks: how did the spec file get its content?

The answer, in most real teams, is one of three things. Someone wrote it from memory during a planning session. Someone prompted ChatGPT and cleaned up the output. Someone turned a vague Slack message from a product manager into a paragraph that felt specific enough.

These are not the same as a spec that came out of structured thinking. They produce documents that look like specifications. They have headings. They have bullet points. They use the right vocabulary. And then the coding agent executes on them faithfully - building, with precision, something that was never quite right to begin with.

SDD shifts the problem one layer upstream. It does not solve it.

Birgitta Böckeler at Thoughtworks spent real time with these tools and came away asking exactly this. Is SDD even suited to large features that are still unclear? Doesn't that require specialist product and requirements skills - stakeholder involvement, structured research - that appear nowhere in these workflows? None of it is in Spec Kit or Kiro. It's assumed to have already happened.

Lay the pipeline out left to right: idea, then spec, then code. The SDD tool ecosystem handles everything on the right. The input problem lives entirely on the left - and no current SDD tool touches it.

A spec is not a prompt

There is a version of this problem that sounds trivial: just write a better spec.

It isn't trivial. A spec is not a more detailed prompt. It's what you get after a series of decisions have been made, challenged, and written down. Who is this for? What happens when the user does the unexpected thing? Those aren't questions you answer by thinking harder while you type. You answer them by talking to someone who knows which questions matter and in what order.

Some SDD tools do include a human-in-the-loop step. Kiro, for instance, asks you to review the requirements document before the coding agent executes. That's the right instinct. The spec should be human-authored and human-validated.

But which human? In every SDD workflow I've seen, the loop closes with the developer. The developer reviews the requirements. The developer refines the scenarios. The developer signs off. That's one person, usually without the business context that makes a scenario precise.

A spec is not just a document. It is the record of a shared understanding. The questions a good spec must answer - who is this for, what does 'done' actually mean, what happens when the user does the unexpected thing - are not questions a single developer can answer alone at a keyboard. They are the product of a conversation between the person who understands the business problem and the person who understands the technical constraints. That conversation is requirements engineering. And it is team-sized, not person-sized.

SDD tools offer HITL - human in the loop. What a real RE process requires is closer to TITL: the team in the loop. The right people having the right conversation before anyone opens a text file.

The difference between a spec that was written from a prompt and a spec that came out of that process is not visible from the outside. Both are Markdown documents. Both have acceptance criteria. The first will produce code that ships wrong on the fifth edge case. The second already accounted for it.

The spec is not documentation. It is communication. No current SDD tool helps a team have the conversation that produces it.

I know what a spec-first build feels like

Speclr - the product I've been building, the product you see here - was developed spec-first from the beginning. Discovery interview before anything was designed. Gherkin before anyone picked a component. A Story Map on the wall before a single line of code. The spec that went to the coding agent was not a prompt I typed. It was the result of real work: every decision written down, every edge case named before the code started.

You could see the difference immediately.

When I handed the coding agent a Gherkin scenario that said:

    Scenario: Interview paused after coverage threshold reached
      Given the user has answered questions covering 80% of product areas
      When the AI assesses coverage as sufficient
      Then the interview pauses
      And the user sees a summary of covered areas
      And two options: continue or proceed to planning

...it built exactly that. Not approximately that. Not a version of that with a missing state. That. Because the scenario was the product of a real process - one that had already asked "what does the user see?" and "what are the two paths?" before anyone wrote any code.
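To make that concrete, here is a minimal Python sketch of the behavior the scenario pins down. It is an illustration only, not Speclr's actual code: the names `InterviewState`, `PauseScreen`, and `assess` are hypothetical, and reading "80%" as "at least 80%" is my assumption. The point is how little is left for the agent to guess: the threshold, the pause, the summary, and the two options are all stated in the scenario.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Assumption: "covering 80% of product areas" is read as "at least 80%".
COVERAGE_THRESHOLD = 0.80


@dataclass
class InterviewState:
    """Hypothetical model of an in-progress discovery interview."""
    covered_areas: List[str]
    total_areas: int
    paused: bool = False

    @property
    def coverage(self) -> float:
        # Fraction of product areas the user's answers have covered so far.
        if self.total_areas == 0:
            return 0.0
        return len(self.covered_areas) / self.total_areas


@dataclass
class PauseScreen:
    """What the user sees when the interview pauses."""
    summary: List[str]
    options: Tuple[str, str]


def assess(state: InterviewState, ai_says_sufficient: bool) -> Optional[PauseScreen]:
    """Pause the interview only when both scenario conditions hold."""
    if state.coverage >= COVERAGE_THRESHOLD and ai_says_sufficient:
        state.paused = True  # Then the interview pauses
        return PauseScreen(
            summary=sorted(state.covered_areas),          # And a summary of covered areas
            options=("continue", "proceed to planning"),  # And exactly two options
        )
    return None  # Otherwise the interview keeps going


if __name__ == "__main__":
    state = InterviewState(["auth", "billing", "export", "search"], total_areas=5)
    print(assess(state, ai_says_sufficient=True))
```

Each `Then` and `And` line maps to one visible line of code. A rough description such as "pause the interview when enough is covered" would leave the threshold, the summary, and the two paths for the agent to invent.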

When I skipped that process - when I was moving fast and handed the agent a rough description instead - the output looked right and worked wrong. Every time.

The spec quality problem is the original problem

SDD tools are solving the "vague prompt produces vague code" problem. They're right that it's a problem worth solving. What they haven't noticed is that "vague spec produces vague code" is the same problem wearing a different hat.

The spec-to-code layer is getting more disciplined. The idea-to-spec layer is still running on intuition, memory, and optimism.

A different critique of SDD - Kent Beck's, and up to a point Fowler's - is about what happens when you learn something mid-build that breaks your spec. The spec should change. That's a real problem. But notice what it assumes: a spec worth updating. Whether your domain knowledge is solid up front or sharpens as you build, it still has to pass through a requirements engineering process at some point. A good RE process handles both. It doesn't care whether you figured things out in a workshop or in a sprint. The spec-first versus iterative debate is about timing. The upstream problem is about thinking. They're not the same argument.

That's where the actual work lives. Not in how you pass requirements to an agent - in how you figure out what those requirements should be. The conversation where someone asks "what happens if the email doesn't exist?" before anyone writes code, not during the sprint where the answer costs a week.

Every hour you spend clarifying a requirement before the spec is written saves you from a sprint of fixing code that did exactly what you asked. SDD is a powerful lever. But a lever only moves what it's attached to.

The tools point right. The problem is on the left.